Memory Optimization Emerges as Critical Factor in AI Infrastructure Economics

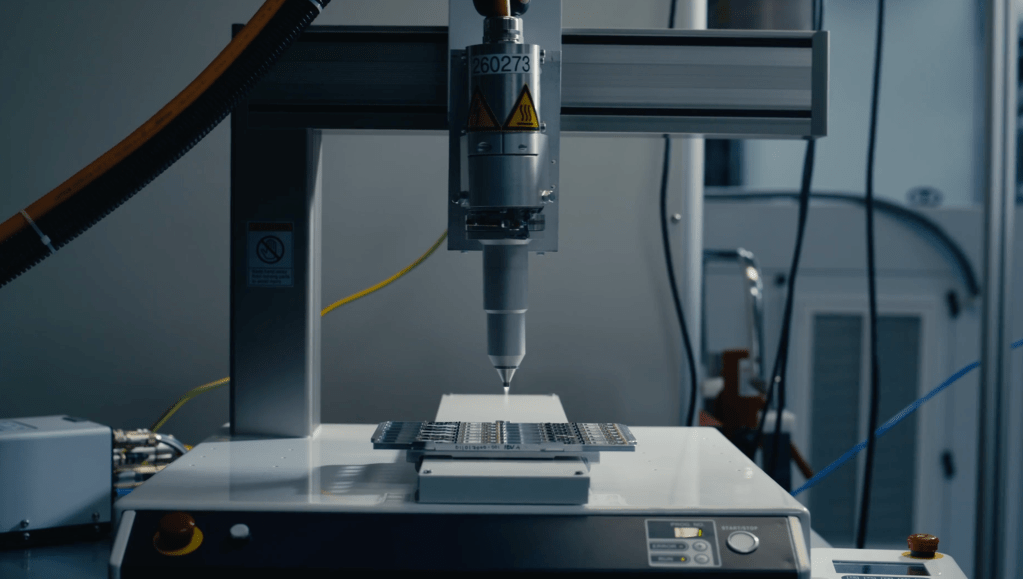

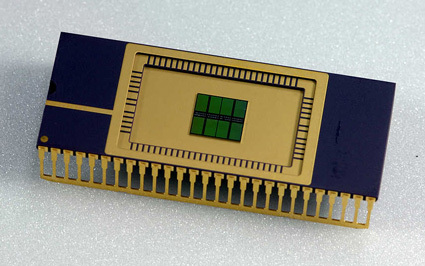

While discussions around AI infrastructure costs typically center on Nvidia GPUs, memory management is rapidly becoming a critical component of the equation. As hyperscalers deploy billions of dollars in new data center infrastructure, DRAM chip prices have surged approximately 7x over the past year, fundamentally altering the economics of AI deployment.

At the same time, a discipline is emerging around memory orchestration: getting the right data to the right agent at the right time. Organizations that master it can run the same queries with far fewer tokens, which may decide whether a business stays viable or fails.

Semiconductor analyst Dan O'Laughlin recently explored the critical role of memory chips on his Substack, featuring insights from Val Bercovici, Chief AI Officer at Weka. Their discussion, while hardware-focused, carries substantial implications for AI software architecture.

Bercovici highlighted the increasing complexity evident in Anthropic's prompt-caching documentation as a key indicator of this trend:

"The tell is if we go to Anthropic's prompt caching pricing page. It started off as a very simple page six or seven months ago, especially as Claude Code was launching—just 'use caching, it's cheaper.' Now it's an encyclopedia of advice on exactly how many cache writes to pre-buy. You've got 5-minute tiers, which are very common across the industry, or 1-hour tiers—and nothing above. That's a really important tell. Then of course you've got all sorts of arbitrage opportunities around the pricing for cache reads based on how many cache writes you've pre-purchased."

The core challenge is deciding how long a prompt should stay in cache: organizations can pay for a 5-minute window or pay more for a 1-hour window. Reading from cache is much cheaper than reprocessing the same input, so good cache management yields substantial savings. However, each new piece of data added to a query may evict content already in the cache, which complicates any optimization strategy.
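The trade-off between the two windows can be made concrete with a small cost model. The sketch below is illustrative only: the price multipliers are placeholder assumptions, not figures quoted in this article, and the names `CachePricing`, `prefix_cost`, and `compare` are hypothetical. It also assumes that each cache hit refreshes the retention window, which should be checked against the provider's documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CachePricing:
    """Multipliers relative to the base input-token price.

    All three values are assumptions chosen for illustration; check the
    provider's current pricing page before relying on them.
    """
    write_5m: float = 1.25  # assumed premium for a 5-minute cache write
    write_1h: float = 2.0   # assumed premium for a 1-hour cache write
    read: float = 0.1       # assumed discounted rate for a cache read


def prefix_cost(gaps_minutes, ttl_minutes, write_mult, read_mult):
    """Cost of resending one cached prefix, in units of
    (prefix tokens x base input price).

    gaps_minutes[i] is the idle time before request i + 1. The first
    request always pays for a cache write. A later request is a cheap
    read if it arrives within the TTL of the previous request (assumes
    a hit refreshes the TTL); otherwise the entry expired and is rewritten.
    """
    cost = write_mult  # first request populates the cache
    for gap in gaps_minutes:
        cost += read_mult if gap <= ttl_minutes else write_mult
    return cost


def compare(gaps_minutes, pricing=CachePricing()):
    """Return the relative cost of three strategies for one request pattern."""
    return {
        "no_cache": 1.0 * (len(gaps_minutes) + 1),
        "ttl_5m": prefix_cost(gaps_minutes, 5, pricing.write_5m, pricing.read),
        "ttl_1h": prefix_cost(gaps_minutes, 60, pricing.write_1h, pricing.read),
    }


if __name__ == "__main__":
    # An agent that pauses 20 minutes between calls misses the 5-minute window.
    print("slow agent:", compare([20, 20, 20, 20]))
    # A chatty agent that calls every minute stays inside both windows.
    print("chatty agent:", compare([1, 1, 1, 1]))
```

Under these assumed multipliers, the slow agent pays more with a 5-minute window than with no caching at all, because every call rewrites the cache, while the chatty agent is better served by the cheaper 5-minute tier. The right tier depends on the request pattern rather than on the price list alone.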

Key takeaway: Memory management in AI models represents a fundamental competitive differentiator going forward. Organizations demonstrating excellence in this domain will gain significant advantages, and substantial innovation opportunities remain in this emerging field.

Previously covered efforts include TensorMesh, a startup focused on cache optimization at the stack level. Further opportunities exist throughout the infrastructure stack:

• Lower stack: Optimizing utilization patterns across different memory types (DRAM vs. HBM) within data centers

• Higher stack: Structuring model swarms to maximize shared cache efficiency
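As a rough illustration of the higher-stack point, the sketch below assumes a prefix-based cache, where a hit requires the leading tokens of a prompt to be identical across requests. Under that assumption, agents in a swarm share more cache when the common context comes first and agent-specific material comes last. The helper names and the word-level "tokens" are hypothetical simplifications.

```python
def shared_prefix_len(prompts):
    """Number of leading tokens common to every prompt (lists of tokens)."""
    if not prompts:
        return 0
    n = 0
    for column in zip(*prompts):
        # Stop at the first position where any prompt disagrees.
        if any(tok != column[0] for tok in column):
            break
        n += 1
    return n


def shared_first(shared_context, agent_instructions, task):
    """Stable shared material first, then agent-specific, then the task."""
    return shared_context + agent_instructions + task


def agent_first(shared_context, agent_instructions, task):
    """Agent-specific material first, which breaks the common prefix."""
    return agent_instructions + shared_context + task


if __name__ == "__main__":
    shared = ["doc"] * 100  # stand-in for a large shared context
    agents = [["role:planner"], ["role:coder"], ["role:reviewer"]]
    tasks = [["plan"], ["implement"], ["review"]]

    good = [shared_first(shared, a, t) for a, t in zip(agents, tasks)]
    bad = [agent_first(shared, a, t) for a, t in zip(agents, tasks)]

    print("shared-first common prefix:", shared_prefix_len(good))
    print("agent-first common prefix:", shared_prefix_len(bad))
```

In the shared-first layout the whole common context is reusable across agents; in the agent-first layout the prompts diverge at the first token, so nothing can be shared under this assumption.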

As organizations refine memory orchestration capabilities, token consumption decreases and inference costs decline accordingly. Combined with improvements in per-token processing efficiency, these advances are driving down server costs substantially. This cost reduction trajectory will enable numerous AI applications currently considered economically unviable to achieve profitability.

Sources:

TrendForce DRAM Spot Price Data

Anthropic Prompt Caching Documentation

Ramp: AI Is Getting Cheaper
