OpenAI Unveils GPT-5.3-Codex-Spark: Lightweight AI Coding Model Powered by Cerebras WSE-3 Chip

OpenAI has officially released GPT-5.3-Codex-Spark, a lightweight iteration of its agentic coding tool Codex, optimized for accelerated inference and real-time collaboration. The announcement marks a significant milestone in OpenAI's strategic partnership with hardware manufacturer Cerebras, representing deeper integration of specialized silicon into the company's infrastructure stack.

Architecture and Performance Optimization

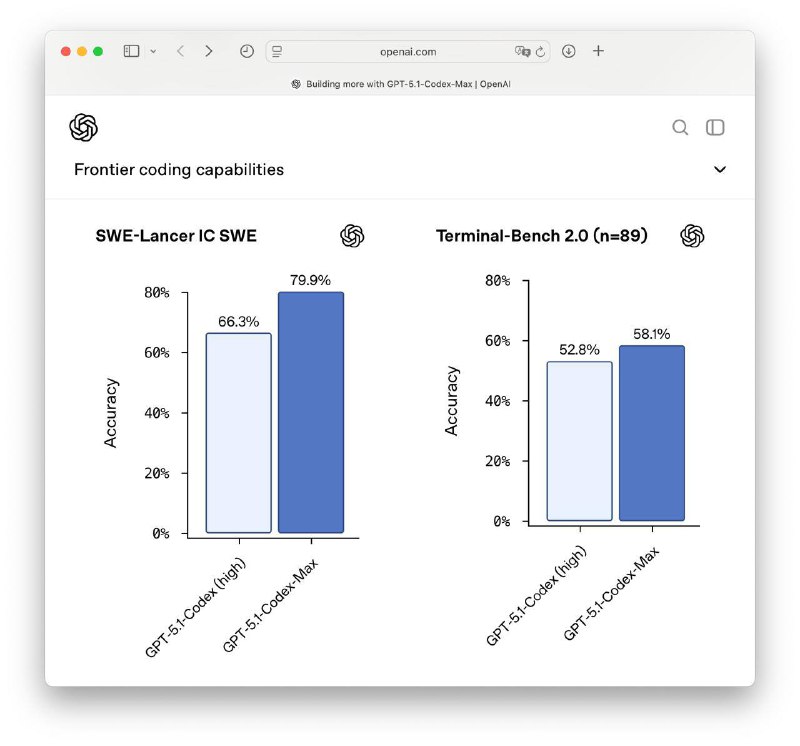

GPT-5.3-Codex-Spark is engineered as a streamlined version of the flagship GPT-5.3-Codex model launched earlier this month. The model is specifically designed for low-latency inference operations, targeting use cases that demand rapid prototyping and iterative development workflows rather than extended, compute-intensive tasks.

Inference runs on Cerebras' Wafer Scale Engine 3 (WSE-3), a third-generation wafer-scale processor with 4 trillion transistors. This hardware choice gives the model substantially shorter response times, positioning Spark as what OpenAI describes as a "daily productivity driver" for developers.

Strategic Hardware Partnership

The deployment of Spark is the first major deliverable from OpenAI's multi-year collaboration with Cerebras, a partnership valued at over $10 billion that was announced last month. According to OpenAI, integrating Cerebras compute infrastructure is central to making AI more responsive across its product ecosystem.

"Codex-Spark is the first step toward a Codex that works in two complementary modes: real-time collaboration when you want rapid iteration, and long-running tasks when you need deeper reasoning and execution," OpenAI stated in its official release.

Availability and Access

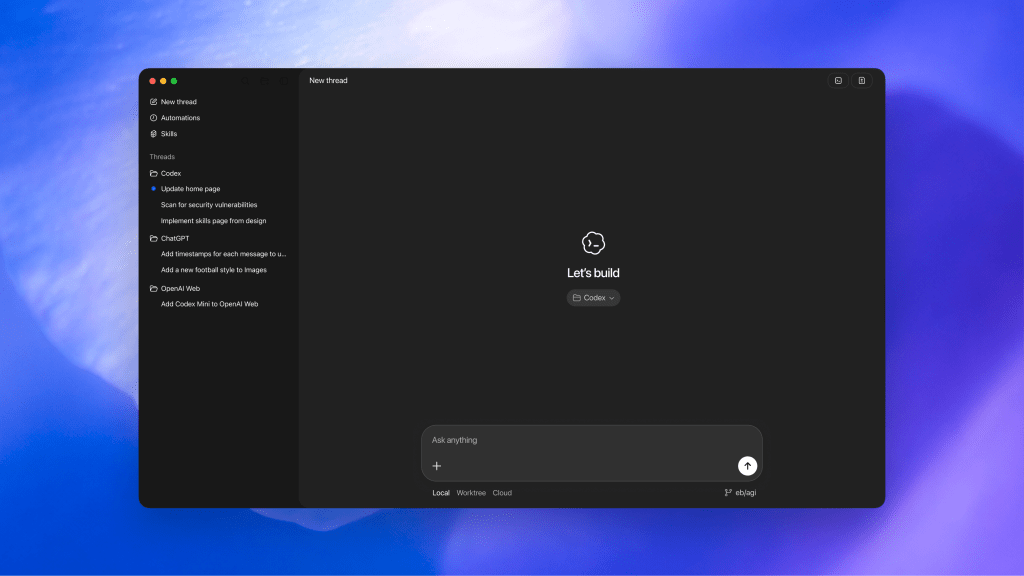

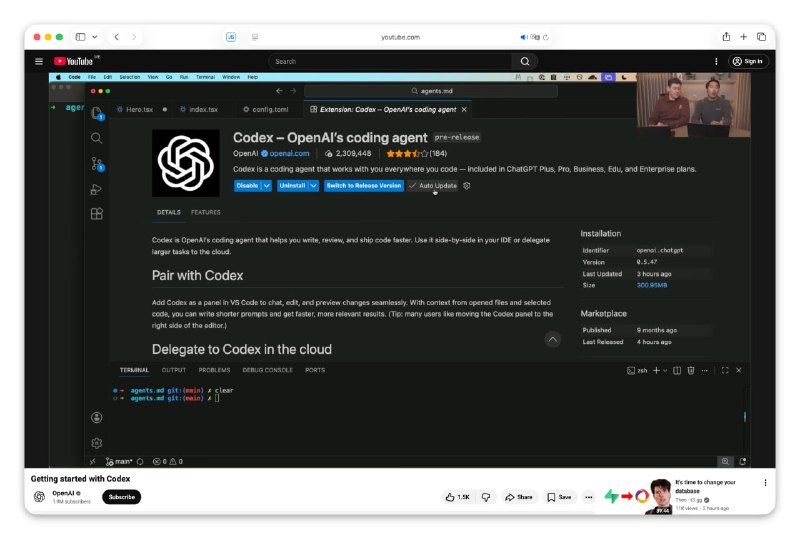

The model is currently available as a research preview exclusively for ChatGPT Pro subscribers within the Codex application. CEO Sam Altman teased the release on social media, noting that the new model "sparks joy" in his workflow.

Cerebras Market Position

Cerebras Systems, operational for over a decade, has experienced accelerated growth during the current AI infrastructure boom. The company recently secured $1 billion in Series H funding at a $23 billion valuation and has publicly indicated plans to pursue an initial public offering.

Sean Lie, CTO and co-founder of Cerebras, emphasized the collaborative potential: "What excites us most about GPT-5.3-Codex-Spark is partnering with OpenAI and the developer community to discover what fast inference makes possible — new interaction patterns, new use cases, and a fundamentally different model experience."

Technical Implications

OpenAI highlighted that Cerebras' wafer-scale architecture excels in scenarios requiring extremely low latency, making it well suited to interactive development environments where millisecond-level response times significantly affect developer experience and productivity.

🔔 Stay tuned and subscribe →

Related news

Read more on our blog

Try these AI tools

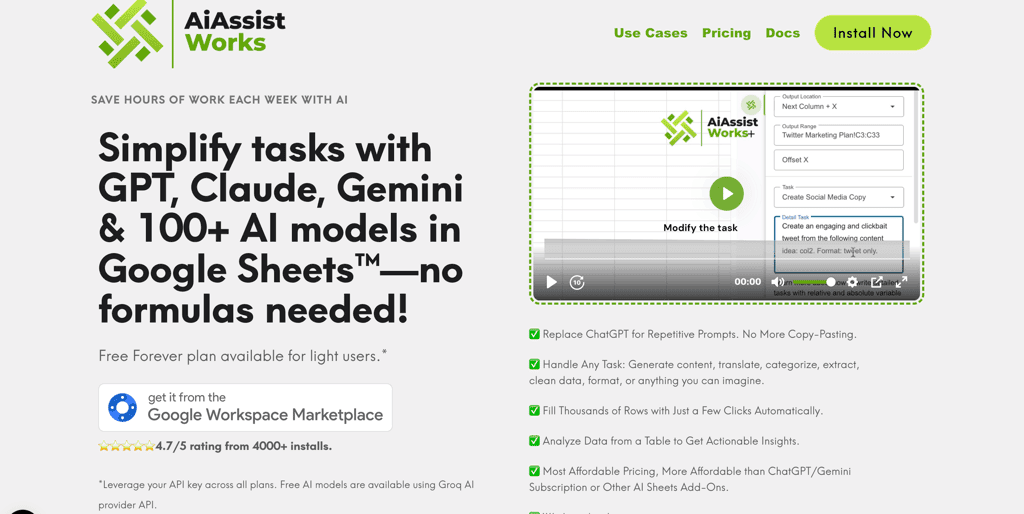

Optimize Google Sheets with AiAssistWorks: AI automation, data analysis, and content generation made...

Discover personalized show and movie recommendations with Watchthis.dev, powered by OpenAI and Verce...

Discover instant video summaries on YouTube with the ChatGPT for YouTube extension. Choose from free...