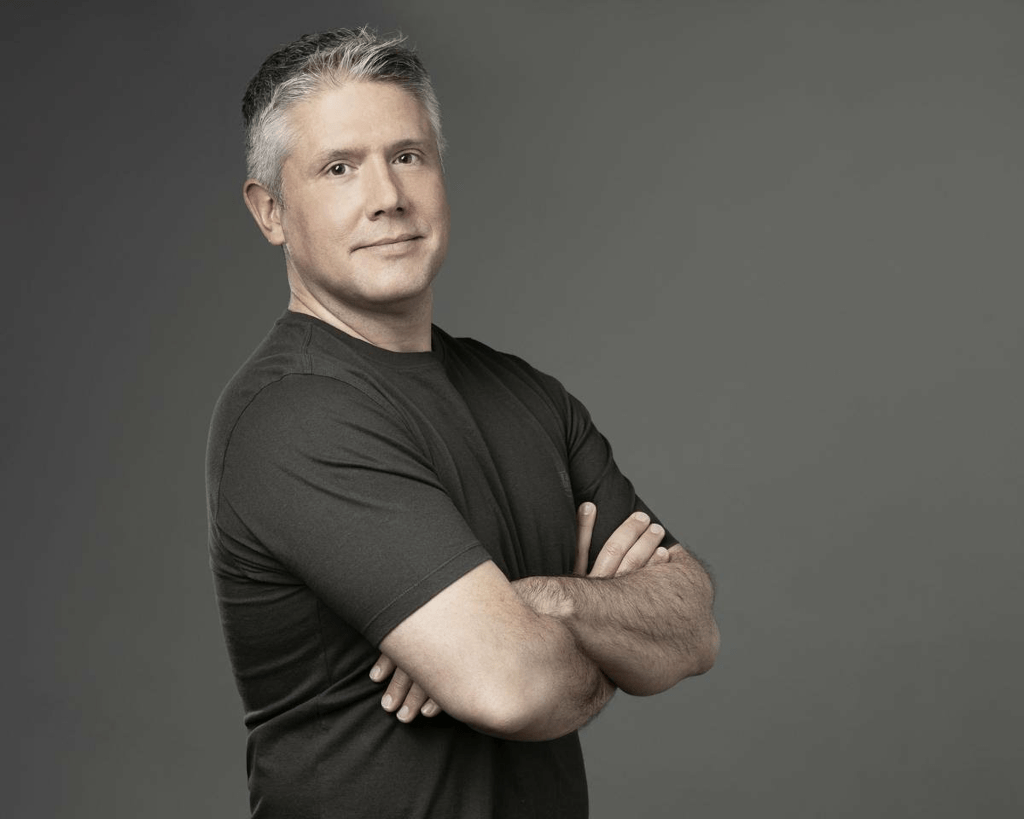

ElevenLabs CEO Envisions Voice as the Dominant AI Interface of the Future

ElevenLabs co-founder and CEO Mati Staniszewski has positioned voice technology as the next critical interface for artificial intelligence, predicting a fundamental shift in human-machine interaction as AI models evolve beyond text-based and screen-dependent paradigms.

Speaking at Web Summit in Doha, Staniszewski outlined how voice models developed by ElevenLabs have progressed beyond reproducing human speech, including its emotional nuance and intonation, and now operate in conjunction with the reasoning capabilities of large language models (LLMs). This convergence, he argues, marks a shift in how people interact with technology.

"In the years ahead, hopefully all our phones will go back in our pockets, and we can immerse ourselves in the real world around us, with voice as the mechanism that controls technology," Staniszewski stated.

This strategic vision underpinned ElevenLabs's recent $500 million funding round at an $11 billion valuation, and reflects a broader industry consensus. Both OpenAI and Google have integrated voice capabilities as core components of their next-generation models, while Apple appears to be developing voice-adjacent, always-on technologies through strategic acquisitions such as Q.ai.

As AI deployment expands into wearables, automotive systems, and emerging hardware form factors, the control paradigm is shifting from tactile screen interactions toward voice-based commands, establishing voice as a critical competitive frontier in AI development.

Iconiq Capital general partner Seth Pierrepont echoed this view at Web Summit, suggesting that while displays will remain relevant for gaming and entertainment, traditional input methods like keyboards are becoming increasingly obsolete. As AI systems become more agentic, Pierrepont noted, interaction models will change as well: systems will acquire guardrails, integrations, and contextual awareness that reduce the need for explicit user prompting.

Staniszewski emphasized the significance of this agentic transformation. Rather than requiring detailed instruction sets, future voice systems will leverage persistent memory and accumulated context, enabling more natural interactions with reduced cognitive overhead for users.

This evolution will influence deployment architectures for voice models. While high-fidelity audio models have predominantly operated in cloud environments, Staniszewski revealed that ElevenLabs is developing a hybrid approach combining cloud and on-device processing. This architecture is designed to support emerging hardware platforms, including headphones and wearables, where voice functions as a continuous companion rather than a discretionary feature.

ElevenLabs has already partnered with Meta to integrate its voice technology across products including Instagram and Horizon Worlds, Meta's virtual reality platform. Staniszewski expressed openness to extending this collaboration to Meta's Ray-Ban smart glasses as voice-driven interfaces spread across new form factors.

However, as voice technology becomes more pervasive and embedded in everyday hardware, it raises significant concerns regarding:

• Privacy protection mechanisms

• Surveillance implications

• Personal data retention policies

• The growing proximity of always-on voice systems to users' daily activities

These concerns are particularly relevant given previous allegations against companies like Google regarding voice assistant data practices, highlighting the need for robust privacy frameworks as voice-based AI systems become more integrated into consumer technology ecosystems.
